
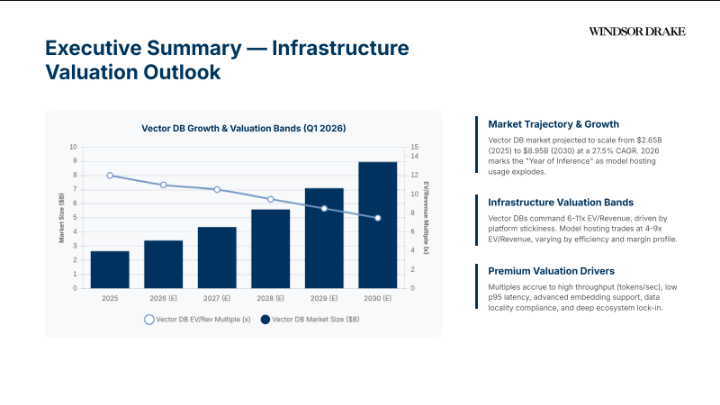

2026 is the year inference spend overtakes training. The money isn’t in building models anymore—it’s in serving them. The market is projected to scale from $2.65 billion in 2025 to $8.95 billion by 2030, a 27.5% CAGR that reflects a fundamental shift in where enterprise AI budgets flow.
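The growth rate can be sanity-checked from the two endpoints quoted above. This is a minimal sketch using the standard compound annual growth rate formula; the function name `cagr` is illustrative, and the only inputs are the market sizes and years stated in the text.

```python
def cagr(start_value: float, end_value: float, years: int) -> float:
    """Compound annual growth rate as a fraction (0.10 means 10%)."""
    return (end_value / start_value) ** (1 / years) - 1


# Figures from the text: $2.65B in 2025 growing to $8.95B by 2030.
rate = cagr(2.65, 8.95, 2030 - 2025)
print(f"Implied CAGR: {rate:.2%}")
```

The result lands within a rounding step of the 27.5% quoted, so the endpoints and the growth rate are consistent with each other.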

The infrastructure stack has matured. We’re no longer debating which embedding model to use. We’re optimizing for throughput, latency, and cost per token. Vector databases are becoming the “long-term memory” for enterprise LLM applications. Model hosting platforms are abstracting infrastructure complexity to accelerate time-to-market. This isn’t about features anymore. It’s about reliability, efficiency, and compliance.

The valuation spread is clear. Vector databases command 6-11x EV/Revenue, driven by platform stickiness and data gravity. Model hosting trades at 4-9x EV/Revenue, varying by efficiency and margin profile. Premium multiples accrue to high throughput (tokens/sec), low p95 latency, advanced embedding support, data locality compliance, and deep ecosystem lock-in.

| Infrastructure Category | EV/Revenue Multiple | Key Valuation Driver |
| --- | --- | --- |
| Vector Databases | 6-11x | Data gravity, RAG stickiness, enterprise governance |
| Model Hosting / Inference | 4-9x | Autoscaling efficiency, developer velocity, observability |
| Observability & Data Ops | 6-10x | Telemetry scale, AI-specific monitoring, root cause analysis |
| Traditional Data Infra | 4-7x | Migration friction, volume-based pricing, stability |

Vector databases aren’t a niche anymore. They’re infrastructure. Retrieval-Augmented Generation (RAG) is the primary driver, turning vector stores into the “long-term memory” for enterprise LLM applications. Multimodal embeddings—text, images, audio—are expanding the use case beyond simple semantic search.

Serverless architecture is the unlock. Separation of compute and storage plus tiered storage (disk/S3 offload) drastically reduces Total Cost of Ownership (TCO), accelerating adoption. You don’t need to overprovision for sporadic RAG workloads anymore. You scale to zero. You pay for what you use.

Enterprise data governance is driving demand. Compliance requirements such as role-based access control (RBAC), data residency guarantees, and audit trails are pushing enterprises toward managed vector solutions. Self-hosted vector stores are losing ground; managed services with SLAs are winning.

Pinecone is the managed-first leader. $750 million+ valuation. Fully managed, serverless architecture focusing on ease of use and scalability. No self-hosted option creates pure SaaS revenue quality. High revenue multiple driven by consumption-based pricing and strong NRR. Enterprise levers include multi-region availability, separation of storage/compute, 99.99% uptime SLAs, and data sovereignty (BYOC).

Weaviate is the open-source hybrid. Series B growth trajectory. Robust OSS core plus managed cloud service. Strong focus on modularity and multi-modal capabilities. Premium accrues to flexible deployment (K8s, Hybrid Cloud). Enterprise levers include hybrid search (keyword + vector) and modular integrations.

Qdrant is the efficiency play. Rust-based engine emphasizing performance and low resource footprint. Gaining traction for high-throughput ingestion and distributed mode. Valuation upside tied to compute efficiency and cost-per-vector. Enterprise levers include resource efficiency and distributed architecture.

| Company | Model & Strategy | Market Signal & Valuation Driver |
| --- | --- | --- |
| Pinecone | Managed-First / Serverless | $750M+ valuation, high NRR, consumption-based pricing |
| Weaviate | Open Source + Cloud | Series B growth, flexible deployment, modularity |
| Qdrant | OSS Core + Managed | Compute efficiency, cost-per-vector optimization |

Pricing varies widely by deployment tier. Entry-level or OSS self-managed deployments cost roughly $100-$300 per month for 10 million vectors. Production standard (managed services with SLAs, moderate throughput, standard HNSW indexing) runs $500-$1,200 per month. High-performance enterprise (high throughput, multi-region replication, hybrid search, strict p99 latency guarantees) costs $2,000+ per month.

The margin profile is strong. Vector databases target 65-75% gross margins. The workload is storage-heavy, and the key cost drivers are hot vs. cold tiering (memory vs. disk/object), index size and dimensionality, and replication factor and data durability. That beats compute (50-65% gross margin) but requires rigorous tiering management.

The market is bifurcating. Open-source models (Weaviate, Qdrant) leverage community velocity and portability. Proprietary engines (Pinecone) focus on serverless abstraction and ease of scale. Serverless architectures are winning TCO battles: separating storage from compute allows granular scaling and eliminates over-provisioning for sporadic RAG workloads.

Ecosystem integration creates lock-in. Deep hooks into model hosting (Hugging Face) and provider marketplaces (AWS/Azure) create defensibility. Pre-built connectors for LangChain/LlamaIndex reduce implementation friction. Latency at scale (p95 <50ms) and data residency compliance are critical enterprise requirements.

As training consolidates, value shifts to the managed infrastructure layer. Platforms enabling scalable, low-latency, and cost-effective model serving are becoming the critical control points in the AI stack. Managed inference provides serverless API endpoints for production LLMs. GPU orchestration enables dynamic routing and multi-region failover. Developer experience abstracts infrastructure complexity to accelerate time-to-market. Operational efficiency maximizes hardware utilization and performance per dollar.

Performance matters. Critical focus on Time to First Token (TTFT) and throughput. Platforms guaranteeing p95 latency under load command enterprise premiums.

Cost efficiency drives adoption. Shift from fixed GPU provisioning to usage-based pricing. Quantization and batching optimizations are key to sustainable unit economics.

Security & governance are non-negotiable. Enterprise requirements for data residency, VPC peering, and audit logs are driving adoption of managed hosting over raw infrastructure.

2026 marks the inflection point where inference spend overtakes training. Demand is shifting from heavy training clusters to efficient, distributed serving infrastructure for multi-model workloads. Four key trends are driving this: serverless & spot markets (explosion of abstract compute layers allowing utilization of excess capacity), quantization at scale (aggressive compression enabling production-grade inference on commodity GPUs), smart orchestration (routing layers dynamically selecting models and endpoints based on real-time latency, cost, and accuracy SLAs), and low-latency edge (shift to local and edge inference for privacy-sensitive and real-time applications).
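To make the smart orchestration trend concrete, here is a minimal sketch of a routing layer that picks the cheapest endpoint satisfying latency and accuracy SLAs. Everything in it is hypothetical: the `Endpoint` fields, the endpoint names, and all numbers are placeholders for illustration, not measurements of any real platform.

```python
from dataclasses import dataclass
from typing import Optional, Sequence


@dataclass(frozen=True)
class Endpoint:
    name: str
    p95_latency_ms: float      # observed latency under current load
    cost_per_1m_tokens: float  # USD per million tokens
    accuracy: float            # offline eval score in [0, 1]


def route(endpoints: Sequence[Endpoint],
          max_latency_ms: float,
          min_accuracy: float) -> Optional[Endpoint]:
    """Return the cheapest endpoint that meets both SLAs, or None."""
    eligible = [
        e for e in endpoints
        if e.p95_latency_ms <= max_latency_ms and e.accuracy >= min_accuracy
    ]
    return min(eligible, key=lambda e: e.cost_per_1m_tokens, default=None)


# Hypothetical fleet; all values are made up for the example.
fleet = [
    Endpoint("large-managed", p95_latency_ms=180.0, cost_per_1m_tokens=8.00, accuracy=0.92),
    Endpoint("mid-selfhosted", p95_latency_ms=60.0, cost_per_1m_tokens=0.60, accuracy=0.88),
    Endpoint("small-edge", p95_latency_ms=20.0, cost_per_1m_tokens=0.20, accuracy=0.79),
]

choice = route(fleet, max_latency_ms=100.0, min_accuracy=0.85)
print(choice.name if choice else "no endpoint meets the SLA")
```

A production router would refresh latency figures continuously and handle fallback when no endpoint qualifies; the sketch only shows the selection rule.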

Hugging Face is “The GitHub of AI.” $4.5 billion valuation. Dominates ecosystem mindshare with massive model catalog integration. Valuation reflects platform lock-in potential despite lower compute margins than pure infrastructure plays. Leads in API breadth, with native integration with the transformers library.

Replicate is the usage-based API leader. $58 million+ funding. Focuses on developer simplicity (“one line of code”). Strong adoption for image/video generation workloads. Gross margins improving via cold-boot optimizations. Excels in standardized API surfaces for diverse models.

Modal is the infrastructure-as-code approach. Targets sophisticated ML engineering teams. High technical moat around container startup times and custom runtime environments. Specialized runtimes achieve sub-second cold starts vs. minutes on standard cloud. Provides granular per-function tracing.

Banana is the cost-efficient serverless GPU inference play. Focuses on scaling economics for startups, leveraging spot instances and aggressive autoscaling to compete on price/performance.

| Platform | Valuation / Funding (or Category) | Key Differentiator |
| --- | --- | --- |
| Hugging Face | $4.5B Valuation | Ecosystem lock-in, massive model catalog |
| Replicate | $58M+ Funding | Usage-based API, developer simplicity |
| Modal | Serverless Infra | Sub-second cold starts, granular tracing |
| Banana | GPU API | Spot instances, cost efficiency |

Compute is the largest cost driver. GPU-heavy operations target 50-65% gross margin. Key cost drivers include GPU utilization & autoscaling efficiency, inference batching & quantization, and cold start latency vs. reserved capacity. The challenge is maximizing hardware utilization without sacrificing performance.

Storage is vector-intensive. Target 65-75% gross margin. Key cost drivers include hot vs. cold tiering (memory vs. disk/object), index size & dimensionality, and replication factor & data durability. Smart tiering strategies are critical to maintaining margins.

Network is egress-heavy. Target 70-80% gross margin. Key cost drivers include egress fees (multi-region/multi-cloud), inter-AZ data transfer, and content delivery & edge caching. Proper architecture choices are critical to managing egress costs.

Model optimization levers reduce compute intensity and memory footprint. Quantization (INT8/4) delivers 2-4x memory reduction. Distillation enables smaller student models. KV-caching reuses attention computations.
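The 2-4x memory reduction follows from bytes per parameter. The sketch below assumes an FP16 baseline and counts weights only, ignoring KV cache, activations, and the small overhead of quantization scales. The 7-billion-parameter model size is an illustrative assumption, not a figure from this report.

```python
# Bytes needed to store one weight at each precision.
BYTES_PER_PARAM = {"fp16": 2.0, "int8": 1.0, "int4": 0.5}


def weight_memory_gb(num_params: float, precision: str) -> float:
    """Approximate weight memory in GB (weights only, no runtime overhead)."""
    return num_params * BYTES_PER_PARAM[precision] / 1e9


NUM_PARAMS = 7e9  # hypothetical 7B-parameter model

baseline = weight_memory_gb(NUM_PARAMS, "fp16")
for precision in ("fp16", "int8", "int4"):
    size = weight_memory_gb(NUM_PARAMS, precision)
    print(f"{precision}: {size:.1f} GB ({baseline / size:.0f}x smaller than fp16)")
```

Under these assumptions INT8 halves the footprint and INT4 quarters it, which is where the 2-4x range comes from.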

Infrastructure levers maximize hardware utilization and minimize idle costs. Batching/token streaming enables high throughput. Spot capacity delivers 60-80% cost savings. Aggressive scale-down policies reduce idle GPU burn.

Vector storage tiering optimizes cost at scale. Cold-tiering offloads older/infrequent vectors to object storage (S3), delivering 40-60% savings. Index compaction (PQ/SQ quantization) reduces memory footprint by 4-8x. Hybrid retrieval fetches full vectors only for top-k candidates.
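A rough footprint calculation shows where the 4-8x compaction range comes from. This sketch assumes float32 source vectors and counts only the vector payload, ignoring HNSW graph links and metadata. The 768-dimension embedding width is an assumption for illustration; 10 million vectors matches the deployment size used in the pricing tiers earlier.

```python
def index_size_gb(num_vectors: int, dims: int, bits_per_dim: int) -> float:
    """Raw vector payload in GB, ignoring graph links and metadata."""
    return num_vectors * dims * bits_per_dim / 8 / 1e9


NUM_VECTORS = 10_000_000
DIMS = 768  # assumed embedding width, not from the report

full = index_size_gb(NUM_VECTORS, DIMS, 32)      # float32 vectors
eight_bit = index_size_gb(NUM_VECTORS, DIMS, 8)  # 8-bit scalar quantization
four_bit = index_size_gb(NUM_VECTORS, DIMS, 4)   # 4-bit codes per dimension

print(f"float32: {full:.2f} GB")
print(f"8-bit:   {eight_bit:.2f} GB ({full / eight_bit:.0f}x smaller)")
print(f"4-bit:   {four_bit:.2f} GB ({full / four_bit:.0f}x smaller)")
```

Product quantization can compress further than this per-dimension view suggests, at some cost in recall, which is why fetching full vectors only for the top-k candidates pairs well with it.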

Infrastructure valuation is increasingly tied to efficiency S-curves. As concurrency scales, platforms utilizing batching, quantization, and edge offload demonstrate superior unit economics and latency profiles. At the top tier, H100s running advanced batching (vLLM/TGI) reach 3,500+ tokens/sec per GPU, against a baseline of roughly 1,200. The critical p95 latency target is <25ms for real-time RAG applications; platforms maintaining this under high concurrency command premiums. Optimized inference COGS run $0.15-$0.40 per 1 million tokens, and the gap between that figure and public API pricing is the margin capture opportunity for efficient infrastructure.
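The link between throughput and cost per token can be sketched with simple arithmetic. The throughput figures are the ones quoted above; the $2.50 hourly GPU price is a hypothetical placeholder, and the calculation assumes the GPU is fully utilized, which real fleets rarely achieve.

```python
def cost_per_million_tokens(gpu_hourly_usd: float, tokens_per_sec: float) -> float:
    """GPU cost in USD to generate one million tokens at full utilization."""
    tokens_per_hour = tokens_per_sec * 3600
    return gpu_hourly_usd / tokens_per_hour * 1_000_000


GPU_HOURLY_USD = 2.50  # hypothetical H100 rental price, not a market quote

baseline_cost = cost_per_million_tokens(GPU_HOURLY_USD, 1_200)   # baseline throughput
optimized_cost = cost_per_million_tokens(GPU_HOURLY_USD, 3_500)  # advanced batching

print(f"baseline:  ${baseline_cost:.2f} per 1M tokens")
print(f"optimized: ${optimized_cost:.2f} per 1M tokens")
```

At the assumed price, the optimized figure falls inside the $0.15-$0.40 COGS range while the baseline does not, which illustrates why throughput gains translate so directly into margin.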

| Cost Category | Target Gross Margin | Primary Efficiency Levers |
| --- | --- | --- |
| Compute (GPU-Heavy) | 50-65% | Quantization, batching, spot instances, autoscaling |
| Storage (Vector-Intensive) | 65-75% | Hot/cold tiering, index compaction, hybrid retrieval |
| Network (Egress-Heavy) | 70-80% | Edge caching, multi-region optimization, CDN |

High-velocity deployment wins. Managed endpoints (OpenAI, Anthropic, Cohere) offer immediate scalability and SLAs, driving faster time-to-production for enterprises. Integrated telemetry and “batteries-included” safety tooling justify premium pricing. Revenue quality matters. Investors reward the recurring predictability of managed APIs. High switching costs (prompt engineering lock-in) create defensible moats and >120% NRR profiles.
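For reference, Net Revenue Retention is commonly computed over a fixed customer cohort as starting recurring revenue plus expansion, minus contraction and churn, divided by starting recurring revenue. The sketch below uses that common definition; the cohort figures are invented for illustration.

```python
def net_revenue_retention(starting_arr: float,
                          expansion: float,
                          contraction: float,
                          churn: float) -> float:
    """NRR for a fixed cohort over a period; 1.20 means 120%."""
    return (starting_arr + expansion - contraction - churn) / starting_arr


# Hypothetical cohort: $1.0M starting ARR, $300k expansion,
# $40k downgrades, $60k lost to churned customers.
nrr = net_revenue_retention(1_000_000, 300_000, 40_000, 60_000)
print(f"NRR: {nrr:.0%}")
```

New-customer revenue is deliberately excluded, so an NRR above 100% means the existing base grows on its own.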

Control & portability drive adoption. Enterprises choose self-hosted open weights for data privacy, lower long-term TCO at scale, and fine-tuning flexibility. Value capture shifts to the hosting infrastructure and governance layer rather than the model IP itself. TCO dynamics favor scale. At high volumes (>10M tokens/day), self-hosted OSS becomes significantly cheaper than managed APIs, driving a “graduation” behavior that infrastructure providers capitalize on.
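The "graduation" point can be framed as a break-even between a fixed self-hosting bill and per-token API pricing. This is a simplified sketch: the monthly infrastructure cost and API price below are hypothetical placeholders chosen for illustration, and the model ignores engineering headcount, utilization gaps, and quality differences between models.

```python
def breakeven_tokens_per_day(fixed_monthly_usd: float,
                             api_usd_per_1m: float,
                             selfhost_usd_per_1m: float = 0.0,
                             days_per_month: int = 30) -> float:
    """Daily token volume above which self-hosting is cheaper than the API."""
    saving_per_1m = api_usd_per_1m - selfhost_usd_per_1m
    if saving_per_1m <= 0:
        raise ValueError("self-hosting never breaks even at these prices")
    tokens_per_month = fixed_monthly_usd / saving_per_1m * 1_000_000
    return tokens_per_month / days_per_month


# Hypothetical inputs: $1,800/month of reserved GPU capacity
# versus a managed API billed at $6.00 per 1M tokens.
threshold = breakeven_tokens_per_day(fixed_monthly_usd=1_800, api_usd_per_1m=6.00)
print(f"Break-even: {threshold / 1e6:.1f}M tokens/day")
```

The threshold moves linearly with the fixed bill and inversely with the API price, so the break-even volume for any real deployment depends heavily on the actual quotes.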

Enterprise monetization via add-ons. Open-source entities monetize via “Enterprise Editions” offering RBAC, SSO, and guaranteed support. Valuation anchors on conversion rates from free-tier users to paid seats. Wider adoption funnel drives long-term value.

Most mature enterprises adopt a hybrid posture: Managed models for prototyping/complex reasoning, and fine-tuned OSS models for high-volume, specific tasks. Infrastructure platforms that support both seamlessly command premiums.

TCO advantage & efficiency create economic lock-in. Platforms delivering >30% compute cost reduction via optimization/quantization create hard economic lock-in that overrides pure feature parity. Once you’ve optimized for a specific infrastructure, switching costs are prohibitive.

Regulatory & enterprise certification create high barriers. SOC2, HIPAA, FedRAMP, and sovereign cloud deployments act as high-barrier entry moats against lighter-weight competitors and OSS alternatives. Achieving these certifications takes quarters, not weeks, and the cost is correspondingly high.

Adjacent service lock-in compounds stickiness. Integrating vector storage, inference, and observability in one platform makes each service harder to replace. Telemetry data scale improves model performance over time. The more data you ingest, the better your platform becomes, creating a flywheel effect.

Data locality & sovereign cloud requirements drive premium pricing. Enterprise buyers demand strict data residency controls and private link connectivity for inference endpoints. Platforms offering region-specific hosting and VPC deployments command 20-30% pricing premiums.

Telemetry data creates proprietary advantages. The scale of telemetry data ingestion enables training superior defensive AI models. This proprietary infrastructure core becomes an unassailable moat. Network effects drive integration depth. Marketplace positioning and deep hooks into provider ecosystems reduce implementation friction and increase switching costs.

Assets demonstrating low-latency p95 performance (<20ms for vector DBs, <25ms for inference), high throughput tokens/sec, and strong Net Revenue Retention (>120%) via expansion command top-tier multiples. Platforms with 99.99% uptime SLAs, multi-region availability, and proven autoscaling efficiency sustain premiums.

Mid-range multiples go to platforms with solid developer adoption but standard efficiency metrics. Their value is anchored by ecosystem integrations and ease of use rather than pure technical superiority. Managed services with SLAs and moderate throughput fall into this range.

Multiples compress for assets with a services-heavy revenue mix (>30%), high compute COGS dragging gross margins below 50%, or no enterprise-grade security and compliance features. Point solutions without broader platform integration face displacement risk and valuation compression.

| Factor | Premium Drivers | Valuation Drags |
| --- | --- | --- |
| Performance | p95 latency <20-25ms, high tokens/sec throughput | Poor autoscaling, high cold-start times |
| Unit Economics | Gross margins >65%, efficient batching & quantization | Compute COGS >50%, poor GPU utilization |
| Enterprise Readiness | SOC2, HIPAA, FedRAMP, data residency, VPC peering | Lack of compliance certifications, poor SLAs |
| Revenue Quality | NRR >120%, software-based, consumption pricing | Services-heavy (>30%), weak retention |
| Ecosystem Lock-in | Deep integrations, marketplace positioning, telemetry scale | Commodity features, open-standard risks |

Vector database competition is shifting from raw performance to TCO. The focus is on tiered storage (hot/cold), index compaction, and recall-aware tiering to manage billion-scale vector costs. The market is maturing. Buyers care about efficiency, not just capability.

Quantization (INT8/4), specialized compilers, and kernel optimizations are driving down cost-per-token. Efficiency becomes the primary valuation lever for hosting platforms. The race to optimize cost per token is the new competitive battleground.

Sovereign clouds and VPC deployments are gaining premiums. Enterprise buyers demand strict data residency controls and private link connectivity for inference endpoints. Compliance isn’t optional; it’s a premium multiplier.

Workloads are bifurcating: massive models in cloud clusters vs. distilled SLMs on edge devices. Infrastructure supporting hybrid orchestration captures high-value industrial use cases. Edge inference is no longer a future state; it’s operational reality.

Relentlessly optimize cost-per-token and p95 latency. Technical efficiency is the primary driver of valuation premiums and competitive differentiation in 2026. Move beyond raw inference by expanding managed features and offering multi-cloud or sovereign deployment options to widen the enterprise TAM. Prove ecosystem lock-in by evidencing strong Net Revenue Retention (NRR) and service attach-rate lift to justify upper-quartile multiples.

Technical validation is critical. Diligence historical telemetry scale and SLO adherence. Verify p95 latency and autoscaling behavior under load. Don’t trust the deck; test the system.

Financial & supply resilience matter. Validate TCO projections against hardware deflation curves. Ensure contract flexibility for potential GPU supply shocks.

Governance & risk assessment is mandatory. Scrutinize data governance frameworks and sovereign capabilities. Assess proprietary API stickiness vs. open standard risks.
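When verifying latency claims, it helps to compute p95 directly from raw request timings rather than accept an average. The sketch below uses the nearest-rank percentile method; the sample latencies are invented, and the 25 ms threshold is the real-time RAG target cited earlier in this report.

```python
import math
from typing import Sequence


def percentile(samples: Sequence[float], pct: int) -> float:
    """Nearest-rank percentile: smallest value with at least pct% of samples at or below it."""
    if not samples:
        raise ValueError("no samples")
    ordered = sorted(samples)
    rank = math.ceil(pct * len(ordered) / 100)
    return ordered[max(rank, 1) - 1]


# Hypothetical request latencies in milliseconds from a load test.
latencies_ms = [12, 14, 15, 13, 16, 18, 14, 15, 13, 17,
                14, 16, 15, 13, 19, 14, 15, 16, 48, 95]

mean_ms = sum(latencies_ms) / len(latencies_ms)
p95_ms = percentile(latencies_ms, 95)
SLO_MS = 25

print(f"mean: {mean_ms:.1f} ms, p95: {p95_ms} ms")
print("meets SLO" if p95_ms < SLO_MS else "misses SLO")
```

In this made-up sample the mean sits under the target while the p95 does not, which is the kind of gap a pitch deck quoting averages can hide.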

Continued bifurcation: Pure-play performance engines vs. integrated enterprise platforms. Mid-market generalists face consolidation pressure. Winning profile: Platforms that marry technical efficiency (lowest cost/token) with enterprise-grade SLAs, observability depth, and robust ecosystem integrations.

The 2026 market places a distinct premium on efficient, enterprise-ready, and compliant infrastructure. Governance, data locality, and TCO optimization are now critical valuation drivers. Vector databases are maturing into comprehensive enterprise data platforms. The shift to serverless models is significantly expanding the Total Addressable Market (TAM) by lowering adoption barriers.

Inference platforms win through reliability, robust telemetry, and ecosystem lock-in. Winners provide seamless scaling and enterprise-grade observability that justify long-term commitments. Efficiency is value. Technical efficiency metrics—specifically tokens/sec, latency, and resource utilization—directly drive valuation multiples. High-performance infrastructure commands premium pricing in the 2026 market.

The next wave of value capture belongs to infrastructure that turns model intelligence into reliable, cost-predictable enterprise services.

©2026 Windsor Drake